Introduction: The Promise of On-Device Generative AI for Raspberry Pi

Generative AI promises capabilities well beyond traditional computing, and for the Raspberry Pi ecosystem that promise takes tangible form in the AI HAT+ 2. This add-on board is a significant, if inherently complex, push to bring advanced AI workloads directly to the edge: it offers the prospect of running large language models (LLMs) and vision-language models (VLMs) on a platform known for its accessibility and compact footprint. How much practical value those capabilities deliver on a constrained device like the Raspberry Pi is a question that calls for careful, data-backed investigation. As we examine its architecture and performance, we temper excitement with a clear view of the trade-offs involved in real-world deployment.

At a Glance: What You Need to Know About the AI HAT+ 2

- Powered by the Hailo-10H AI accelerator, delivering 40 TOPS (INT4) inferencing performance.

- Features 8GB of dedicated on-board RAM, crucial for running LLMs and VLMs locally.

- Primarily designed for Generative AI workloads, a shift from the previous AI HAT+’s vision-centric focus.

- Connects via Raspberry Pi 5’s PCIe interface, ensuring high-bandwidth communication.

- Community feedback highlights concerns about a proprietary software stack that limits model flexibility compared to more open alternatives.

- Real-world LLM throughput may not always surpass the Pi 5’s CPU, but the board excels at offloading work from the CPU and in low-power scenarios.

- Ideal for privacy-critical, low-latency edge applications like robotics and secure monitoring, rather than broad, cloud-scale LLM tasks.

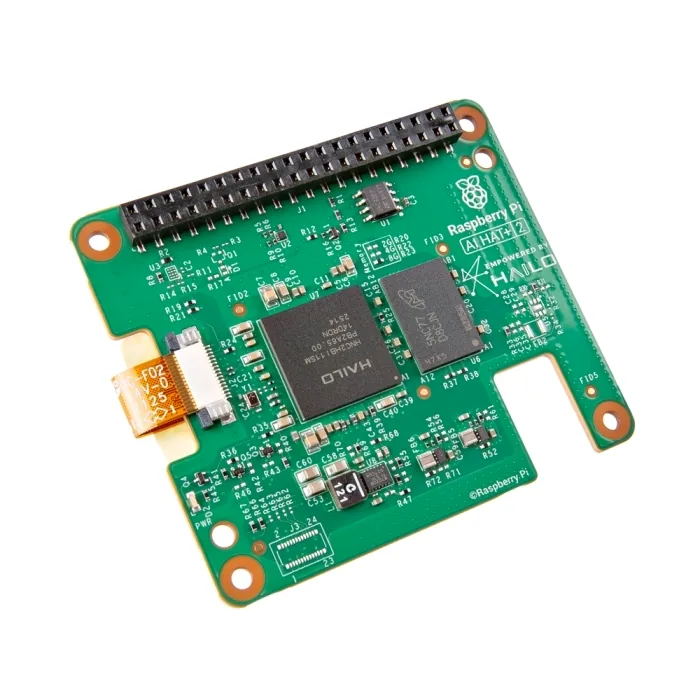

Hardware Dissected: The Raspberry Pi AI HAT+ 2 in Detail

At the core of the Raspberry Pi AI HAT+ 2 lies the Hailo-10H AI accelerator, a potent neural processing unit (NPU) capable of delivering up to 40 Tera Operations Per Second (TOPS) of INT4 inferencing performance. This raw computational power is complemented by a critical addition: 8GB of dedicated LPDDR4X RAM directly on the HAT. This onboard memory is paramount for hosting the parameters of larger language and vision models, freeing the Raspberry Pi 5’s main CPU and system RAM to handle other essential operating system and application tasks. The entire module interfaces with the Raspberry Pi 5 via its PCI Express (PCIe) connection, ensuring a high-bandwidth pathway for efficient data transfer between the host and the accelerator. This integrated design aims to create a cohesive and powerful edge AI solution, specifically engineered to manage the demanding memory and computational requirements of generative AI models.

Raspberry Pi AI HAT+ 2: Technical Specifications

| Specification | Detail |
|---|---|
| AI Accelerator | Hailo-10H NPU |
| Performance (INT4) | Up to 40 TOPS (Tera Operations Per Second) |
| On-board RAM | 8GB LPDDR4X (dedicated) |
| Interface | PCI Express (PCIe) for Raspberry Pi 5 |
| Compatibility | Raspberry Pi 5 only (requires updated Raspberry Pi OS 64-bit ‘Trixie’) |
| Power Consumption | ~2.5W (typical, workload-dependent) |
| Operating Temperature | 0°C to 50°C (ambient) |
| Cooling | Optional heatsink included (recommended for sustained workloads) |
| Software Integration | Raspberry Pi OS auto-detection, rpicam-apps camera stack, Hailo GenAI Model Zoo, hailo-ollama server |
| Dimensions | HAT+ specification compliant |
| Production Lifetime | Until at least January 2036 |

The Generative AI Leap: Why the ‘2’ Matters

The numerical increment in the AI HAT+ 2 signifies more than just an iteration; it represents a fundamental shift in design philosophy. While the original AI HAT+ was a capable accelerator primarily focused on traditional computer vision tasks like object detection and segmentation, its successor is meticulously engineered for the demanding world of generative AI. The Hailo-10H’s 40 TOPS of inferencing power, coupled with its crucial 8GB of dedicated LPDDR4X RAM, is the key differentiator. This substantial onboard memory allows the AI HAT+ 2 to load and execute large language models (LLMs) and vision-language models (VLMs) entirely on the card, circumventing the need to constantly shuttle data to and from the Raspberry Pi 5’s main system RAM. This not only prevents bottlenecks but also liberates the Pi 5’s CPU and memory, ensuring they remain fully available for the operating system and other applications. This dedicated resource allocation is precisely what enables the board to run heavier, more complex generative models locally, a feat previously challenging or impossible on earlier Raspberry Pi AI solutions.
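To make the role of the 8GB of onboard RAM concrete, here is a rough back-of-the-envelope sketch of how much memory a model's weights occupy at a given quantization level. The function name and the chosen model sizes are illustrative assumptions, and the estimate covers weights only: it ignores the KV cache, activations, and runtime overhead, and says nothing about which models the Hailo toolchain actually supports.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate storage for model weights alone, in GB (10^9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


ONBOARD_RAM_GB = 8  # dedicated LPDDR4X on the AI HAT+ 2, per the article

# Model sizes in the 1-1.5B range the article cites for supported LLMs
for params in (1.0, 1.5):
    for bits in (4, 8):
        gb = weight_footprint_gb(params, bits)
        share = 100 * gb / ONBOARD_RAM_GB
        print(f"{params:>4}B params @ {bits}-bit: {gb:.2f} GB "
              f"({share:.0f}% of {ONBOARD_RAM_GB} GB, weights only)")
```

Even under these simplified assumptions, the arithmetic shows why small quantized models sit comfortably in dedicated memory without touching the Pi 5's system RAM.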

AI HAT+ vs. AI HAT+ 2: A Generational Shift

| Feature | Original Raspberry Pi AI HAT+ | Raspberry Pi AI HAT+ 2 |
|---|---|---|
| AI Accelerator Chip | Hailo-8L / Hailo-8 | Hailo-10H |
| Performance | 13 TOPS / 26 TOPS | 40 TOPS (INT4) |
| On-board RAM | None specified (relied on Pi’s system RAM) | 8GB LPDDR4X dedicated |
| Primary AI Focus | Computer Vision (object detection, segmentation) | Generative AI (LLMs, VLMs) & Advanced Computer Vision |
| LLM Support | Limited/Not primary | Optimized for smaller LLMs/VLMs locally |
| PCIe Interface | Yes (for Pi 5) | Yes (for Pi 5) |
| Price (Approx.) | $70 – $110 | $130 |

Performance Unveiled: Benchmarks, Realities, and Limitations

Jeff Geerling critically evaluates local LLMs on Raspberry Pi 5, comparing the Hailo HAT+ 2 to an N100 mini PC.

The headline figure of ‘40 TOPS’ for the AI HAT+ 2, quoted for INT4 inferencing, is undoubtedly impressive. However, our scientific approach at LoadSyn calls for a closer look at how this theoretical maximum translates to real-world performance. The speed you actually see depends heavily on model architecture, quantization level, and the efficiency of the software pipeline feeding the NPU. The AI HAT+ 2 excels at offloading AI tasks from the Raspberry Pi 5’s CPU and system RAM, freeing those resources for other work, but direct comparisons of raw LLM inference speed (tokens/s) are more nuanced. For certain models, the Hailo-10H may not deliver significantly more tokens per second than the Pi 5’s quad-core Arm Cortex-A76 CPU (Broadcom BCM2712) alone. Where the HAT+ 2 can stand out is in a potentially faster Time to First Token (TTFT), which is vital for interactive applications, although this metric is difficult to benchmark directly with currently available tools.

The PCIe Bottleneck: A Hidden Constraint?

Although the Hailo-10H supports a PCIe x4 link, the Raspberry Pi 5 exposes only a single PCIe lane (x1) on its external FFC connector. The Pi 5 is certified for PCIe Gen 2 on that lane; Gen 3 speeds can be enabled but are not certified. The resulting bandwidth ceiling can limit the real-world throughput of the AI HAT+ 2, especially for data-intensive workloads, and keep it from reaching its theoretical peak. Users chasing the headline 40 TOPS figure have reported this as a significant bottleneck.
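The size of the gap between one lane and four can be estimated from the standard PCIe line rates and encodings. The sketch below is only that arithmetic: the function and table names are illustrative, Gen 2 is included for comparison, and the figures are theoretical one-direction maxima before protocol overhead, not measurements of the AI HAT+ 2.

```python
# (line rate in GT/s per lane, encoding efficiency) for each PCIe generation
PCIE_GENERATIONS = {
    2: (5.0, 8 / 10),     # 8b/10b encoding
    3: (8.0, 128 / 130),  # 128b/130b encoding
}


def link_bandwidth_gb_s(gen: int, lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s, before protocol overhead."""
    gt_per_s, efficiency = PCIE_GENERATIONS[gen]
    return gt_per_s * efficiency * lanes / 8  # bits -> bytes


for gen, lanes in ((2, 1), (3, 1), (3, 4)):
    print(f"PCIe Gen {gen} x{lanes}: {link_bandwidth_gb_s(gen, lanes):.2f} GB/s")
```

A Gen 3 x4 link offers four times the bandwidth of Gen 3 x1, which is the headroom the single-lane connector leaves unused.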

Expanding on the performance reality, sources like CNX Software have observed that while the AI HAT+ 2 effectively offloads the CPU and significantly reduces power consumption for AI tasks—a major benefit for battery-powered or resource-constrained applications—the raw LLM inference speed (tokens/s) may not always drastically outpace the Raspberry Pi 5’s CPU for all models. This is a critical point for users expecting a pure speed boost across the board. The real value often lies in the efficiency gains, allowing the Pi 5’s CPU to remain responsive for other critical operations, and in the promise of a lower Time to First Token (TTFT), which enhances user experience in interactive scenarios. However, reliable, direct benchmarking of TTFT with current tools remains a challenge, making it difficult to quantify this advantage precisely.
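For readers who want to measure these two metrics themselves, a minimal sketch is to time a streaming response: TTFT is the delay until the first token arrives, and tokens/s is computed over the decode phase that follows. Everything here is an assumption for illustration. `fake_stream` is a hypothetical stand-in that simulates prefill and decode delays, and in practice you would pass in whatever token iterator your inference server provides.

```python
import time
from typing import Iterable, Iterator


def measure_stream(tokens: Iterable[str]) -> dict:
    """Consume a token stream and return TTFT, token count and decode rate."""
    start = time.perf_counter()
    first = last = None
    count = 0
    for _ in tokens:
        last = time.perf_counter()
        if first is None:
            first = last  # arrival time of the first token
        count += 1
    if first is None:
        raise ValueError("stream produced no tokens")
    decode_time = last - first
    rate = (count - 1) / decode_time if count > 1 and decode_time > 0 else float("nan")
    return {"ttft_s": first - start, "tokens": count, "tokens_per_s": rate}


def fake_stream(n: int, prefill_s: float, per_token_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a streaming model response."""
    time.sleep(prefill_s)  # simulated prompt processing before the first token
    for i in range(n):
        if i:
            time.sleep(per_token_s)  # simulated per-token decode latency
        yield f"tok{i}"


stats = measure_stream(fake_stream(n=20, prefill_s=0.05, per_token_s=0.005))
print(stats)
```

Separating the two numbers matters because a device can lose on steady-state tokens/s while still feeling more responsive thanks to a shorter wait for the first token.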

Ideal Use Cases: Where the AI HAT+ 2 Truly Shines

- Vision-Language Models (VLMs): Excels in tasks combining image processing with natural language, such as object detection, scene analysis, captioning, and smart search for home security, industrial QA, or robotics. The high-compute image encoder stage of VLMs maps naturally to the AI HAT+ 2’s strengths, enabling event triggering and meaningful summarization at the edge.

- Local Voice-to-Action Agents: Enables privacy-preserving voice control and command processing for devices, smart home automation, and human-machine interaction. This is especially potent where continuous input processing (large prefill) and short, low-bandwidth responses are needed, offering natural, menu-free control without cloud dependency.

- Advanced Computer Vision: Offers significant performance gains for large Convolutional Neural Networks (CNNs) and Transformer-based vision models (e.g., CLIP, zero-shot detection). This allows for richer perception and multi-stage vision pipelines (detection, embedding, semantic matching, reasoning) entirely at the edge, unlocking more capable and responsive applications in security and robotics.

- Offline Process Control & Secure Data Analysis: Ideal for environments without internet connectivity or where data privacy is paramount, such as air-gapped industrial monitoring or secure facility management. It enables local summarization of logs and sensor data, ensuring sensitive information never leaves the device.

- CPU Offloading in Robotics & GPIO Projects: Frees the Raspberry Pi 5’s CPU to handle other critical tasks while the AI HAT+ 2 manages inference. This parallel processing capability is crucial for responsive, complex robotic systems where the main CPU needs to remain available and responsive for real-time control and interaction.
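The CPU-offloading pattern in the last bullet can be sketched with a worker thread: the host submits an inference request, then keeps servicing its control loop until the result is ready. This is an illustration under stated assumptions, not Hailo's API. `run_inference` is a hypothetical placeholder that simply sleeps where a real call into the accelerator runtime would block.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_inference(frame_id: int) -> str:
    """Hypothetical stand-in for a blocking call into the accelerator runtime."""
    time.sleep(0.05)  # the accelerator is busy; the calling thread only waits
    return f"frame {frame_id}: no obstacle"


def control_loop(frame_id: int, tick_s: float = 0.005) -> tuple[str, int]:
    """Keep ticking the control loop while one inference request is in flight."""
    ticks = 0
    with ThreadPoolExecutor(max_workers=1) as pool:
        pending = pool.submit(run_inference, frame_id)
        while not pending.done():
            ticks += 1  # e.g. read sensors or update motor outputs here
            time.sleep(tick_s)
        return pending.result(), ticks


result, ticks = control_loop(frame_id=1)
print(result, f"({ticks} control ticks while waiting)")
```

Because the heavy computation happens off the CPU, the waiting thread costs almost nothing, and the main loop stays free for real-time control.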

The Ecosystem Lock-In: Software and Community Concerns

“Don’t do it, it uses non standard Ollama and .HEF file. You will not be able to do custom standardize AI tuning. Rather save your money and get a MAC Mini instead.”

— Community Comment

While the hardware capabilities of the AI HAT+ 2 are compelling, the software ecosystem is a significant point of contention for many users. Raspberry Pi and Hailo provide a curated software stack that includes the Hailo GenAI Model Zoo and the hailo-ollama server. This approach offers a streamlined, plug-and-play experience for supported models, but it comes at the cost of flexibility. Models must be compiled into Hailo Executable Format (.HEF) files, which limits users who want to run arbitrary open-source GGUF models or follow custom, standardized AI tuning workflows. The proprietary toolchain creates a less open environment than platforms where users are free to experiment with a wider range of models and toolchains. The feeling of being ‘locked in’ to a more restrictive ecosystem is a recurring sore point within the community, and it directly affects the perceived value proposition.

- Hailo-ollama Server: A customized Ollama backend tailored for Hailo hardware, exposing a local REST API for model interaction. While functional, its specialized nature means it doesn’t offer the same broad compatibility as a standard Ollama server.

- Hailo GenAI Model Zoo: A curated collection of Hailo-supported models, including DeepSeek-R1-Distill, Llama 3.2, and Qwen2.5-Coder. This provides an immediate starting point but limits choices to what Hailo has optimized and made available.

- Proprietary .HEF Files: Models must be compiled into Hailo Executable Format (.HEF), which is a significant barrier for users accustomed to widely adopted, open formats like GGUF. This restricts direct compatibility and flexibility for custom tuning or porting models from other ecosystems.

- Software Maturity Challenges: Early adopters have reported issues with setup, driver compatibility (particularly when using PCIe switches), and integrating the HAT into established open-source projects like Frigate, indicating a need for ongoing refinement.

- Open WebUI: Raspberry Pi recommends running Open WebUI, a popular frontend for LLMs, within Docker containers. This is due to incompatibility with the system Python (Python 3.13) shipped with Raspberry Pi OS ‘Trixie,’ adding an extra layer of complexity for some users.
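As an illustration of what talking to a local REST API like the hailo-ollama server might look like, the sketch below builds an Ollama-style chat request using only the Python standard library. The host, port, endpoint path, response shape, and model tag are all assumptions borrowed from the standard Ollama API and are not confirmed by this article, so check the official documentation for the values your installation actually uses.

```python
import json
import urllib.request

# Assumptions, not confirmed by this article: host, port and endpoint path.
BASE_URL = "http://localhost:8000"
CHAT_PATH = "/api/chat"  # Ollama-style chat endpoint


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming, Ollama-style chat request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        BASE_URL + CHAT_PATH,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(model: str, prompt: str, timeout_s: float = 120.0) -> str:
    """Send the request and return the reply text (assumes Ollama's response shape)."""
    request = build_chat_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        body = json.load(response)
    return body["message"]["content"]


# "llama3.2:1b" is a hypothetical model tag used only for illustration.
request = build_chat_request("llama3.2:1b", "Summarise the sensor log in one sentence.")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

With a server running, calling `ask("llama3.2:1b", "...")` would send the request. The script itself only prints the request it would send, so it runs without any hardware attached.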

Despite these challenges, the community’s curiosity and hope for the AI HAT+ 2’s potential remain strong, particularly concerning its integration into popular open-source projects. There are ongoing, active discussions and development efforts focused on bringing Hailo-10H support to platforms like Frigate NVR and Home Assistant. Developers from Hailo themselves are engaging with the community, addressing technical hurdles such as runtime version conflicts and compatibility issues when the HAT is placed behind PCIe switches. These collaborative efforts, though facing complexities, demonstrate a clear path towards overcoming initial integration barriers, offering a hopeful outlook for users eager to leverage the AI HAT+ 2 in their smart home and automation projects.

Value Proposition & Alternatives: Is the AI HAT+ 2 for You?

Pros

- Dedicated GenAI Acceleration: 40 TOPS (INT4) and 8GB dedicated RAM enable local LLM/VLM execution.

- CPU Offloading: Frees the Pi 5’s main CPU and RAM for other tasks, improving system responsiveness.

- Privacy & Low Latency: On-device processing ensures data privacy and deterministic performance.

- Seamless Camera Integration: Fully integrated with Raspberry Pi’s camera software stack.

- Long-Term Production: Commitment to production until at least January 2036.

- Cost-Effective Edge AI: Serious AI acceleration at a fraction of the cost of high-end systems.

Cons

- PCIe x1 Bottleneck: External FFC connector limited to a single PCIe lane, hindering full 40 TOPS potential.

- Proprietary Software Stack: Relies on Hailo’s toolchain and .HEF models, limiting flexibility.

- Limited LLM Scale: Supported LLMs are typically smaller (1-1.5B parameters).

- Setup Complexity: Challenges with driver installation and Docker workarounds for software like Open WebUI.

- Raw LLM Speed: Tokens/s performance may not drastically exceed the Pi 5’s CPU for all tasks.

- Raspberry Pi 5 Only: Not compatible with older models due to exclusive PCIe reliance.

Raspberry Pi AI HAT+ 2 vs. Key Alternatives for Local AI

| Feature | Pi 5 + AI HAT+ 2 | NVIDIA Jetson Orin Nano | Mac Mini M4 | Generic x86 Mini PC |
|---|---|---|---|---|
| Price (Approx.) | ~€225 (Total) | ~€399 | ~€650+ | ~€150-400 |
| AI Compute | 40 TOPS (NPU) | 67 TOPS (GPU) | ~38 TOPS (ANE) | CPU only |
| Dedicated AI RAM | 8GB on HAT | 8GB unified | 16-24GB unified | System RAM |
| Primary Strength | Edge GenAI / Offloading | GPU acceleration | Powerful Local Inference | Value / Flexibility |
| LLM Capability | 1-1.5B params | Quantized GPU models | Up to 13B models | Slow (CPU only) |
| Power (Avg) | 7-12W | 15W | 10-25W | 15-35W |

A look at various Raspberry Pi AI accelerators and an installed AI HAT+ 2, demonstrating the ecosystem of edge AI solutions.

The Edge AI Enigma: A Pragmatic Verdict on the AI HAT+ 2

The Raspberry Pi AI HAT+ 2 undeniably marks a significant milestone for on-device generative AI within the Raspberry Pi ecosystem. Its dedicated Hailo-10H NPU and 8GB of onboard RAM unlock capabilities for local LLMs and VLMs that were previously out of reach, particularly for specific edge cases where privacy, low latency, and CPU offloading are paramount. This makes it a powerful tool for applications like secure industrial monitoring, advanced robotics, and privacy-focused smart home automation. However, a pragmatic assessment requires tempering expectations. The Raspberry Pi 5’s PCIe x1 bottleneck means that the theoretical 40 TOPS may not always translate into a proportional increase in raw LLM performance (tokens/s) over the Pi 5’s CPU, though the benefits of offloading and improved Time to First Token remain valuable. Furthermore, the proprietary software stack, relying on Hailo’s toolchain and .HEF files, introduces a degree of ecosystem lock-in that limits model flexibility and custom tuning compared to more open alternatives. Ultimately, the value of the AI HAT+ 2 is highly dependent on your specific project requirements and your willingness to operate within its curated environment. It is not a universal solution for all generative AI ambitions, but for the right niche—demanding efficient, private, and low-latency edge AI within a supported pipeline—it stands as a remarkably capable and cost-effective accelerator.

Frequently Asked Questions

What is the Raspberry Pi AI HAT+ 2?

It’s an add-on board for the Raspberry Pi 5, featuring a Hailo-10H AI accelerator with 40 TOPS (INT4) performance and 8GB of dedicated RAM. It’s designed to bring generative AI capabilities, such as running LLMs and VLMs, directly to the edge on a Raspberry Pi.

Can the AI HAT+ 2 run models on the scale of ChatGPT?

No, it cannot run models on the scale of ChatGPT. It is designed for smaller LLMs (typically 1-1.5 billion parameters) that are optimized for edge devices with limited memory and power.

How does the AI HAT+ 2 differ from the original AI HAT+?

The AI HAT+ 2 offers significantly higher generative AI performance (40 TOPS vs. 13/26 TOPS) and includes 8GB of dedicated RAM, making it suitable for LLMs and VLMs. The original was primarily focused on computer vision tasks.

Why might real-world performance fall short of the rated 40 TOPS?

While the Hailo-10H is rated for 40 TOPS, the Raspberry Pi 5’s external PCIe connector is limited to a single lane (x1). This bandwidth bottleneck can restrict real-world performance for data-intensive workloads.

What are the software limitations?

The AI HAT+ 2 relies on Hailo’s proprietary software stack and requires models to be compiled into .HEF files, which limits flexibility for users who prefer to run arbitrary open-source models (e.g., GGUF).